We write about innovation and new technologies

In our blog, you will find insights into what we are building, the technology we use, and what we do on a day-to-day basis.

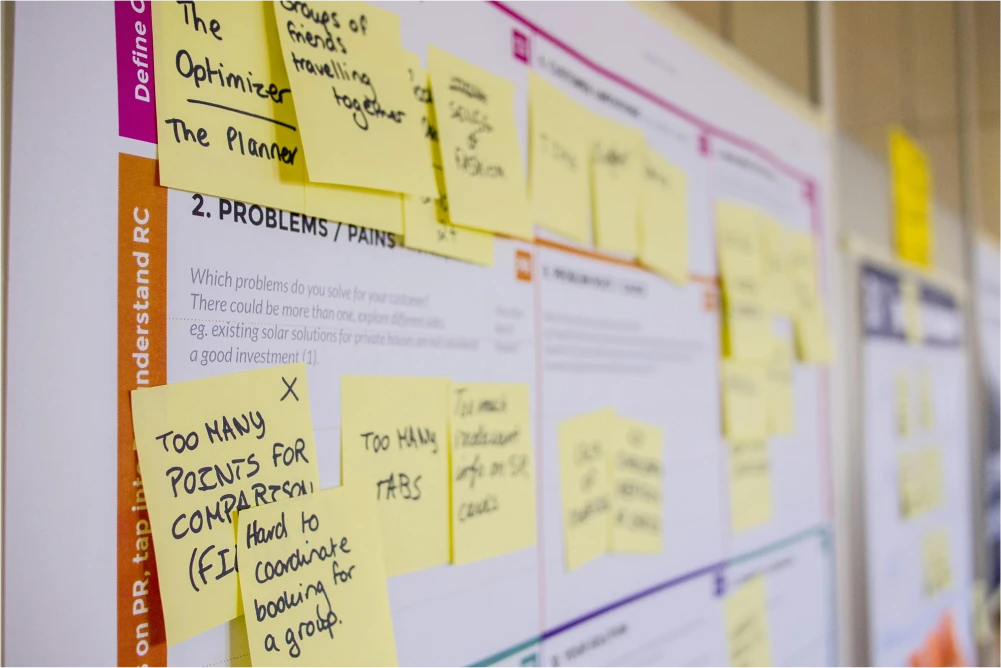

Enabling Sustainable Failure

A venture builder's take on the "Why" and "How" of failing quickly for traditional companies.

Four relevant problem spaces in carbon capture projects

The results of our carbon capture market analysis indicate four relevant challenges that companies have to overcome.

Stryza Interview

In this interview, we speak with Max Steinhoff, founder of Stryza, a venture within wattx. Max discusses his experiences at wattx, its influence on launching Stryza, the future of Stryza and wattx's role in fostering innovation for German SMEs.

Hacking Construction

During wattx's first fully remote hackathon, we organized our Business Development, Product, and Data teams to work on different challenges in construction.

How ethical is Artificial Intelligence?

In the realm of autonomous driving, AI faces ethical dilemmas similar to redirecting a trolley. Should AI decide human survival?

How to Give Constructive Feedback Correctly

Giving feedback can be a burden for both the giver and the receiver. But without feedback, there can be no improvement. And it's more than that: giving constructive feedback can lead to open and transparent communication between co-workers and can strengthen a positive working culture.

Future trends in the AR-based consumer goods industry

For AR to reach mainstream adoption, it requires a groundbreaking application, such as real-time navigation in intricate locations like shopping malls or train stations. Ultimately, the business case will be the determining factor.